Logging. Everybody does it, nobody thinks about it. It’s one of those things that grows organically, responding to the needs of the moment. A developer has a problem, adds a log statement, watches it for a while, then moves on. Logs become your application’s stream-of-consciousness, as if it were narrating its own life:

“Well, look at that! It’s 2:30 p.m., and I just got an HTTP request …

“It’s from somebody named ‘bob1984’ …

“Hey, I know that guy! He’s User 571277! Great to see you, Bob!

“So, what can I do for you? Update Story 754? No problem.

“Boom! All done. By the way, that took me 250 milliseconds. Have a nice day.”

Obviously an exaggeration, but in terms of the information conveyed it’s not that different from:

14:30:00.100 Received POST /story/754

14:30:00.120 Auth User bob1984 (ID: 571277)

14:30:00.350 Response HTTP 200 OK (250ms)

This style of logging is natural and intuitive. For a developer, it’s easy to watch the logs in real-time while interacting with a local instance of the application.

Most logging libraries have APIs oriented around logging strings, a tacit endorsement of this pattern. (See Java, Go, or Python for examples.) Unfortunately, the conversational logging style breaks down pretty quickly once your software is deployed to production.

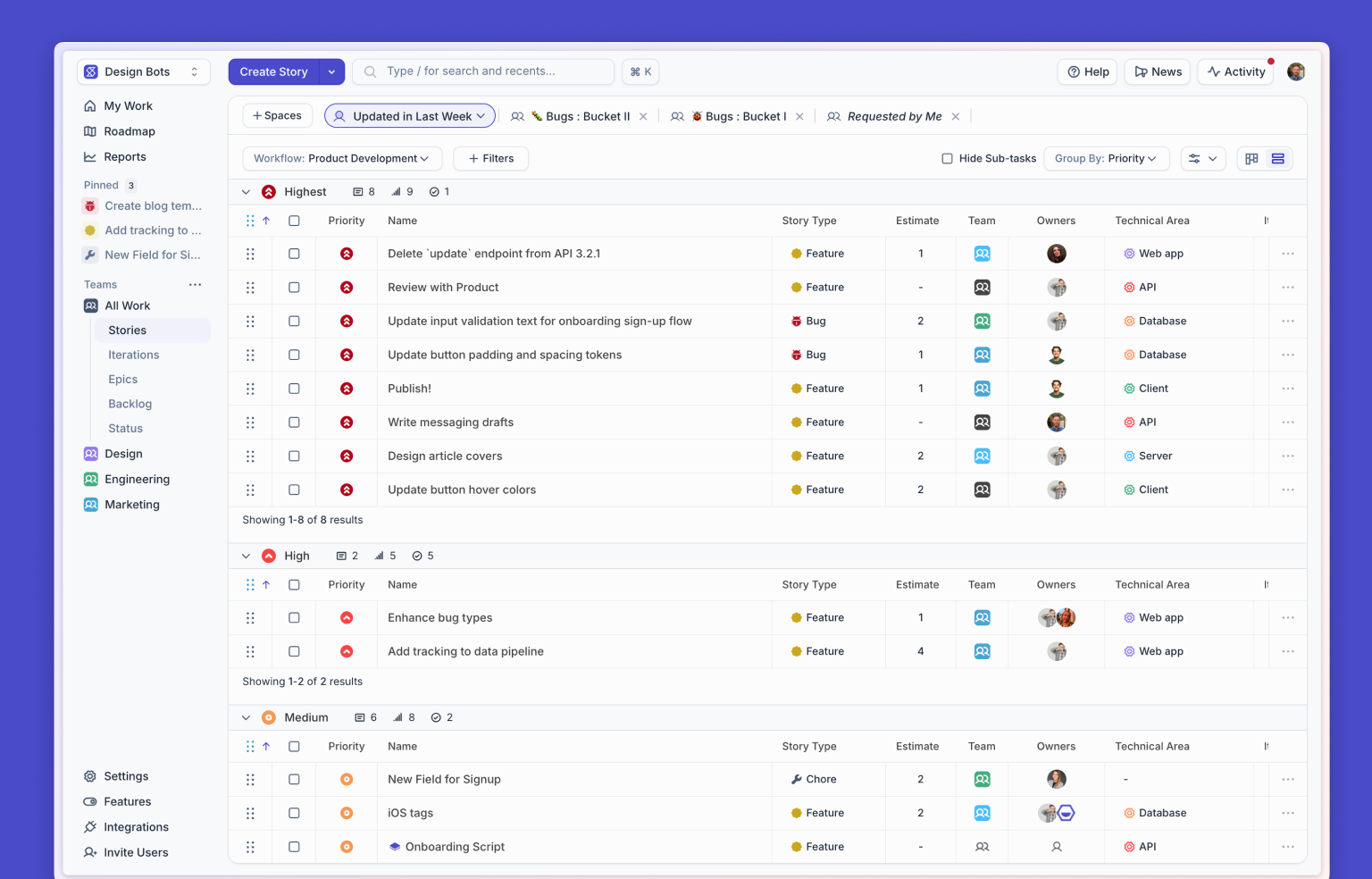

In Shortcut’s early years, we went through the usual stages of logging. Our logs started as strings written to local files. When it became too cumbersome to search files across dozens of machines, we started shipping logs to a centralized service. As the need arose for more automated monitoring, the log messages acquired more numeric data such as request IDs and time measurements.

Still, our log messages were fundamentally just text, limiting us to text-based tools. Even in the centralized logging service, our search capabilities were not much more sophisticated than the classic grep. In order to analyze the behavior of our systems beyond the scope of a single request, a developer would have to write a script to find all the relevant log messages, parse out the relevant data, and export it to a structured format such as CSV. This was tedious at best. At worst, it was simply impossible to answer the questions we wanted to ask.

At this point, there’s a fork in the road, and which path you take depends on what kind of organization you work for. If you’re stuck with a “toss it over the wall” mentality between Development and Operations, then you risk creating a lot of redundant work: Development teams write code that logs strings, Ops teams write code to parse those strings into structured data. It takes time to gather new information from the logs and computing power to extract it. If a developer inadvertently changes the wording of a log message, the downstream parsers break and everyone starts blaming the other side. The whole logging pipeline is slow, expensive, and fragile.

On the happier path, where developers and operations collaborate to make each other’s lives easier, you can sidestep this whole circus by generating logs as structured data from the start. I’m happy to say that this was the path we took at Shortcut.

Logs as data

As we recognized a need for structured data about our systems, we started exploring the range of tools and services that could help. Suffice to say, there are dozens of them, at every price point between “free” and “call for an estimate.” We’ll talk more about the specific tools we chose in a future article, but we knew, regardless of which tools we chose, we would need to get our logs into a structured data format. The next question we had to answer was: What format? We considered a few options:

- Fixed columns

- key=value pairs

- EDN

- JSON

The fixed-column format is standard for web servers, where it’s known as Common Log Format, and a lot of tools know how to parse it. But it only describes web requests. We wanted to log data from a variety of different sources with different fields, not a fixed set of columns, so that was out.

Space-separated key=value pairs are the default format for some analysis tools, such as Splunk, and is semi-codified as logfmt. It has the virtue of being relatively compact and easy to read in its raw form. But despite wide use, there isn't a formal specification for this format, and different implementations can have inconsistent behavior regarding quoting.

Most of our backend is written in Clojure, so Extensible Data Notation (EDN) was attractive from that point of view. Sadly, though, support in third-party tools is all but nonexistent.

And so we come back, as always, to JSON. It's not the most compact or human-readable format, but it is the most universally and consistently supported machine-readable format. When future aliens visit Earth to explore the ruins of human civilization, their Rosetta Stone will be a hard disk containing JSON alongside XML and CSV.

How to structure logs

As we surveyed the landscape of log-analysis tools, we noticed that a lot of them had similar constraints:

- Any dimension you want to search or filter by must be represented by a field in the raw data. Adding new fields after the fact is expensive or not possible.

- JOINs across different data sources are not possible, or slow enough to be impractical over large result sets.

- Most nested structures, such as JSON Objects or Lists, will be “flattened” into simple key/value pairs for indexing.

We didn’t know upfront all the fields we might need, so we couldn't make a complete schema from the start. But we could choose a few common fields that we wanted every log message to have.

Part 1: What happened?

Our most important field is tag. The value of tag is a short string that identifies what kind of message it is. (An alternative name might have been type, but the word type is already so overloaded that we wanted to avoid it.) The tag also indicates what other fields we expect to see in that message.

To figure out what tags we needed, we started with the existing string-based log messages and tried to answer “What is the essential information this log message wants to convey?” (Some examples: api_response, user_created, slack_notification_sent.) Then we added fields to express all the information that was in the original string. So a message like this:

UPDATE STORY by User 5678 for Org 1234 response 200 OK (250ms)

Became structured data like this:

{

"tag":"story_updated",

"user_id": 5678,

"organization_id": 1234,

` "response_status": 200,`

"elapsed_msec": 250

}

We also removed any redundant messages. By the time we finished, each tag was unique, used in exactly one place. Given a tag, a developer can find the exact location where that log message was produced, which is easier and more consistent than trying to match up file names and line numbers.

Part 2: When did it happen?

Tags are always named in the past tense, like webhook_received or email_sent. This is important and reinforces another rule: a log message must be written after the event it describes. Why after? Because if it comes before, it doesn't really tell you anything!

In the conversational-style log, you can read a sequence of messages as a group: “About to do the thing,” followed by “Did the thing,” or sometimes, “Failed to do the thing.” But when you're processing millions of messages across dozens of machines, reassembling those sequences is expensive.

Furthermore, a log message like “About to do the thing” doesn't really convey any useful information. Maybe the process went on to do the thing, or maybe it died immediately after. Maybe it started to do the thing but didn’t finish. The presence of “About to do the thing” doesn’t prove that any of those scenarios did or didn’t occur.

By contrast, when a log message comes after the event, you know the operation completed, at least from the application's point of view. If the process completed an operation but died before it could log it, that’s unfortunate, but you still don't have any more or less information than you did if it had logged “About to do the thing.”

So our log messages are written after the event, in the past tense. For an interaction with an external service, we would usually have two tags, one for success and one for failure. For example, email_sent and email_failed. This makes it easy to answer both positive questions (“How many notifications did we send last week?”) and negative questions (“How many notifications did we miss?”) with a simple search.

Part 3: In what context?

We knew going in that every dimension we wanted to search on needed to be present in the logs, but it was still the area where we made the most mistakes. It’s hard to anticipate every use case upfront.

For example, Shortcut is a multi-tenant web application, so almost everything happens in the context of a customer Organization. We included an Organization ID in almost every message, but sometimes we forgot, or it wasn’t convenient at that point in the code. We needed it to be automatic based on the context of the current request.

For another example, originally our logs didn’t include a field for the deployment environment (development, staging, production). Why? Because when we started out, we were only collecting logs from production! When we added logging to other environments, we forgot to add a field to differentiate them. Although the volume from staging and development was tiny compared to production, it was still enough to add noise.

Lessons learned

We made other, more subtle mistakes in designing our logs. One of the worst was the decision to split information between api_request and api_response messages. Having two messages is not a problem in itself. Our mistake was that some fields, such as the URI, were only included in the api_request message and others were only in api_response. The split made some searches unnecessarily difficult. A better approach would have been to include all the significant details in api_response.

Another problem was the consistency of field names. We had no trouble remembering the standard fields that appear in every message. But for the less common fields, we didn’t have a fixed convention. Just to name one example, we had fields named email, email_to, address, and email_address.

Over time, we gradually fixed these problems. We’re still working on it, and we’re still learning.

In recent years, we’ve noticed more being written about the practices of logging and monitoring, often under the banner of Observability. Making systems more transparent and maintainable is an ongoing process in which we, as an industry, still have much to learn. Hopefully, our story here will be a small but useful contribution.

Coming up next: The tools and technology that went into our logging stack.

<!-- Code for CTA component starts here-->

<div class="post_body_cta"><div class="post_body_cta-heading">

Build what’s next

</div><div class="post_body_cta-text">

Experience the most lightweight, enjoyable way for your team to work.

</div><div class="common_buttons_wrap"><a href="/signup" class="button primary text-color-white w-button">Get Started - free forever</a>

<a href="https://api.app.shortcut.com/oauth/signup" class="button is-google w-button">Sign up with Google</a></div></div>

<!-- Code for CTA component ends here-->

.avif)

%20(788%20x%20492%20px)%20(1).png)

.png)